AI-generated structured data powers everything from automated pipelines to customer-facing APIs, but the outputs are far from guaranteed. Benchmarks show even top models struggle: GPT-4o averages around 75% on StructEval, while the best reasoning models hit only 47% on DSR-Bench. For production systems, that gap between "mostly works" and "always works" is where real incidents happen. This article lays out the testing practices that close that gap, from curating golden datasets to wiring evaluations into CI/CD.

Table of Contents

- Establish golden datasets for input-output evaluation

- Apply tiered testing for full coverage

- Leverage provider-native and schema-based validation

- Implement multi-layered guardrails and human review

- Benchmark, judge, and automate: the quality loop

- Why AI output quality demands relentless iteration, not just strict validation

- Accelerate reliable output testing with datatool.dev

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Curate golden datasets | Develop representative input-output pairs to anchor your model testing and spot regressions early. |
| Adopt a tiered testing strategy | Run tests from quick smoke checks to deep regression suites to cover all cases efficiently. |
| Validate with schemas | Combine provider-native strict modes and schema tooling for both format and meaning validation. |
| Layer safety and human review | Stack multiple guardrails and escalate tricky outputs to human experts for assurance. |
| Automate and monitor continuously | Tie evaluations into CI/CD and monitor metrics to keep quality high as models evolve. |

Establish golden datasets for input-output evaluation

With the reliability challenge in mind, it's essential to ground your evaluations in robust reference datasets. A golden dataset is a curated collection of input-output pairs that represents what correct behavior looks like for your specific use case. It's not a random sample. It's a deliberate, maintained asset.

What goes into a golden dataset? Think in three categories:

- Typical cases: The 60 to 70 percent of inputs your system sees daily. These confirm that normal operation stays stable across model updates.

- Edge cases: Inputs that sit at the boundary of your schema or domain. Empty fields, unusually long strings, ambiguous phrasing, and missing required keys all belong here.

- Adversarial cases: Inputs designed to break your prompt or confuse the model. Contradictory instructions, prompt injection attempts, and domain-shifted queries expose fragility before production does.

Golden datasets of 20 to 50 curated input-output pairs covering all three types give you a repeatable baseline for prompt testing and regression evaluation. Start with 20 pairs if you're new to this. Grow to 50 as you discover failure modes in production.

The real value shows up during regression testing. When you update a prompt, swap a model version, or change a schema, running your golden dataset immediately tells you whether behavior drifted. Without it, you're guessing.

"A golden dataset isn't just a test fixture. It's a living record of what your system is supposed to do. Teams that treat it as a one-time setup quickly lose confidence in their evaluations."

Pro Tip: Use synthetic data generation to expand your golden dataset cheaply. Prompt a capable model to produce variations of your edge cases, then manually review and label them. This lets you scale coverage without proportional human effort. Pair this with provider-native validation features so those synthetic outputs are checked automatically.

When building your input-output pairs, be specific about the expected output format. Don't just record "a valid JSON object." Record the exact field names, types, and value constraints. Vague expectations produce vague test results.
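
As a minimal sketch of what "exact field names, types, and value constraints" can look like in practice, the snippet below records one golden case and checks a raw model output against it. The names `FieldSpec`, `GoldenCase`, and `check_output`, along with the invoice example, are hypothetical and exist only for illustration.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class FieldSpec:
    """Expected type plus an optional value constraint for one output field."""
    type_: type
    check: Callable[[Any], bool] = lambda value: True


@dataclass
class GoldenCase:
    """One curated input-output expectation, tagged by case type."""
    case_id: str
    kind: str  # "typical", "edge", or "adversarial"
    prompt: str
    expected_fields: dict = field(default_factory=dict)


def check_output(case: GoldenCase, raw_output: str) -> list:
    """Return human-readable failures; an empty list means the case passed."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError as exc:
        return [f"not valid JSON: {exc.msg}"]
    if not isinstance(data, dict):
        return ["top-level value is not an object"]
    failures = []
    for name, spec in case.expected_fields.items():
        if name not in data:
            failures.append(f"missing field: {name}")
        elif not isinstance(data[name], spec.type_):
            failures.append(f"wrong type for {name}: {type(data[name]).__name__}")
        elif not spec.check(data[name]):
            failures.append(f"constraint failed for {name}: {data[name]!r}")
    return failures


# Hypothetical golden case: field names, types, and constraints are all explicit.
case = GoldenCase(
    case_id="invoice-001",
    kind="typical",
    prompt="Extract the invoice total and currency from: 'Total due: 42.50 EUR'",
    expected_fields={
        "total": FieldSpec(float, lambda v: v >= 0),
        "currency": FieldSpec(str, lambda v: v in {"EUR", "USD", "GBP"}),
    },
)
```

Because each failure names the field and the rule that broke, a regression run over the dataset reports what drifted, not only that something did.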

Apply tiered testing for full coverage

After assembling golden datasets, organizing tests in logical layers ensures consistent, comprehensive checks. Not every test needs to run on every code push. Tiered testing lets you match test depth to deployment risk.

Here's how the three tiers break down:

| Tier | Name | Size | When to run |
|---|---|---|---|
| 1 | Smoke tests | 10 to 20 examples | Every commit |
| 2 | Core evaluations | 50 to 100 examples | Every PR or daily |
| 3 | Full regression | 200 to 500 examples | Pre-release or weekly |

Across all tiers, aim for roughly 40% happy-path cases, 25% edge cases, and 15% adversarial cases. The remaining 20% covers format-specific checks and domain-specific constraints.
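
To make the arithmetic concrete, here is a small sketch that turns the 40/25/15/20 mix into case counts for a given tier size. The function name `allocate_cases` and the category labels are illustrative assumptions, as is the choice to give any rounding remainder to the happy path.

```python
# Percentage mix taken from the text; the labels themselves are hypothetical.
MIX_PERCENT = {
    "happy_path": 40,
    "edge": 25,
    "adversarial": 15,
    "format_and_domain": 20,
}


def allocate_cases(tier_size: int) -> dict:
    """Split a tier of `tier_size` cases by type using integer arithmetic."""
    counts = {name: tier_size * pct // 100 for name, pct in MIX_PERCENT.items()}
    # Floor division can leave a few cases unassigned; hand them to the
    # happy path so the counts always sum to tier_size.
    counts["happy_path"] += tier_size - sum(counts.values())
    return counts


smoke = allocate_cases(20)
full = allocate_cases(500)
```

For a 20-case smoke tier this gives 8 happy-path, 5 edge, 3 adversarial, and 4 format or domain cases. A 500-case regression suite splits 200/125/75/100.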

Here's a practical approach to building out each tier:

- Start with smoke tests. Pick your 10 to 15 most representative happy-path cases. These run fast and catch catastrophic regressions immediately.

- Build your core eval set. Add the top edge cases you've already encountered in production. Include at least one adversarial example per major input category.

- Expand to full regression. Systematically cover every schema variant, every optional field combination, and every documented edge case. Use synthetic data to fill gaps.

- Tag your test cases. Label each case by type (happy path, edge, adversarial) and by schema feature (nested objects, arrays, nullable fields). This lets you filter and analyze failure patterns quickly.

- Review tier composition quarterly. As your model and schema evolve, some smoke tests become irrelevant and new edge cases emerge. Keep the tiers current.
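
The tagging step above pays off when you aggregate failures. A minimal sketch, assuming each test result carries a set of tags (the `CaseResult` type and the tag names are hypothetical):

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class CaseResult:
    case_id: str
    tags: frozenset  # case type plus schema features, e.g. {"edge", "arrays"}
    passed: bool


def failures_by_tag(results) -> Counter:
    """Count failed cases per tag so clusters of failures stand out."""
    counts = Counter()
    for result in results:
        if not result.passed:
            counts.update(result.tags)
    return counts


results = [
    CaseResult("c1", frozenset({"happy_path", "arrays"}), True),
    CaseResult("c2", frozenset({"edge", "nullable_fields"}), False),
    CaseResult("c3", frozenset({"edge", "nested_objects"}), False),
    CaseResult("c4", frozenset({"adversarial", "nested_objects"}), True),
]

print(failures_by_tag(results).most_common(1))
```

Here both failures share the `edge` tag, which points at boundary handling rather than at one specific schema feature.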

Pro Tip: Automate regression test integration directly into your CI/CD pipeline. Every prompt change or model version bump should trigger at least the smoke and core eval tiers automatically. Manual test runs get skipped under deadline pressure. Automation doesn't.

The tiered approach also helps with cost management. Running 500 LLM evaluations on every commit is expensive. Running 15 smoke tests on every commit and reserving the full suite for pre-release is sustainable.
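
One way to encode that tradeoff is a simple mapping from pipeline trigger to the tiers it runs. The sketch below is an assumption about how you might wire it: the trigger names and the exact tier sizes (picked from within the ranges in the table above) are illustrative, not prescribed.

```python
# Hypothetical tier sizes, chosen from within the ranges in the table above.
TIER_SIZES = {"smoke": 15, "core": 75, "full": 350}

# Hypothetical trigger names; map them to whatever events your CI exposes.
TRIGGER_TIERS = {
    "commit": ["smoke"],
    "pull_request": ["smoke", "core"],
    "release": ["smoke", "core", "full"],
}


def eval_calls(trigger: str) -> int:
    """Number of model evaluations a given trigger will run."""
    return sum(TIER_SIZES[tier] for tier in TRIGGER_TIERS[trigger])


def weekly_calls(commits: int, pull_requests: int, releases: int) -> int:
    """Rough weekly evaluation volume for budgeting purposes."""
    return (
        commits * eval_calls("commit")
        + pull_requests * eval_calls("pull_request")
        + releases * eval_calls("release")
    )
```

With these sizes, a week of 100 commits, 20 pull requests, and one release costs 3,740 evaluations. Running the 350-case suite on every commit alone would cost 35,000.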

Leverage provider-native and schema-based validation

Once test coverage is in place, ensuring outputs match expected formats is the next critical step. Format failures are among the most common and most disruptive issues in production AI pipelines.

For structured outputs, use provider-native features like OpenAI's strict JSON schema mode and Anthropic's strict tool use, combined with Pydantic or Zod post-validation for semantic correctness. These tools work at different layers, and you need both.

Provider-native constraints enforce structure at the generation level. OpenAI's `strict: true` mode forces the model to produce output that conforms to your JSON schema before it even reaches your application. Anthropic's strict tool use mode does the same. These reduce malformed output significantly, but they don't guarantee semantic correctness.

Post-validation tools catch what provider constraints miss:

- Pydantic (Python): Define your expected schema as a Python class. Parse the model output directly. Type errors, missing fields, and invalid values raise exceptions immediately.

- Zod (TypeScript/JavaScript): Same concept for TypeScript environments. Parse and validate in one step, with detailed error messages.

- JSON schema validation: Use a dedicated validation layer to check structure independently of your application logic. This is especially useful when your schema evolves frequently.

Watch for two common pitfalls. First, strict mode adds token overhead, which increases latency and cost. Second, a model may return a refusal instead of a schema-conforming response, which causes silent failures if you don't handle refusals explicitly. Always log refusals and treat them as test failures, not missing data.
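
A minimal sketch of explicit refusal handling follows. It assumes a hypothetical message dict with optional `content` and `refusal` keys. Real SDK response objects differ by provider, so treat the field access as a placeholder to adapt.

```python
import json


def classify_response(message: dict) -> tuple:
    """Classify a model message as ("ok" | "refusal" | "empty" | "malformed", detail).

    The message shape (keys "content" and "refusal") is an assumption made
    for this example, not any specific provider's API.
    """
    if message.get("refusal"):
        return ("refusal", message["refusal"])
    content = message.get("content")
    if not content:
        return ("empty", None)
    try:
        return ("ok", json.loads(content))
    except json.JSONDecodeError as exc:
        return ("malformed", exc.msg)


def is_test_failure(message: dict) -> bool:
    """Anything other than parseable content counts as a failure, including refusals."""
    status, _ = classify_response(message)
    return status != "ok"
```

Returning a distinct status for refusals keeps them visible in logs and test reports instead of letting them look like missing records.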

Pro Tip: Always post-validate for meaning, not just syntax. A JSON object can be syntactically valid while containing a date field with the value "not a real date" or a confidence score of 999. Semantic validation catches these. Syntactic validation doesn't.
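
The two examples in the tip can be sketched as a small semantic check. This version uses only the standard library to stay self-contained. In a real project the same rules would more naturally live in a Pydantic model or Zod schema. The field names `due_date` and `confidence`, and the 0 to 1 range, are assumptions made for illustration.

```python
from datetime import date


def semantic_errors(record: dict) -> list:
    """Check meaning, not syntax: the record is assumed to be parsed JSON already."""
    errors = []

    # A string can be valid JSON and still not be a real calendar date.
    try:
        date.fromisoformat(record["due_date"])
    except (KeyError, TypeError, ValueError):
        errors.append("due_date is not an ISO 8601 calendar date")

    # A number can be valid JSON and still be outside the meaningful range.
    confidence = record.get("confidence")
    is_number = isinstance(confidence, (int, float)) and not isinstance(confidence, bool)
    if not is_number or not 0.0 <= confidence <= 1.0:
        errors.append("confidence must be a number between 0 and 1")

    return errors


good = {"due_date": "2024-03-01", "confidence": 0.9}
bad = {"due_date": "not a real date", "confidence": 999}
```

Both records are syntactically valid JSON objects, but only `good` passes. `bad` produces one error per field.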

Implement multi-layered guardrails and human review

Validation alone isn't enough. Adding layered protections ensures quality and safety in production environments. Think of guardrails as defense in depth: each layer catches what the previous one missed.

A practical guardrail stack looks like this:

- JSON schema enforcement: The first gate. Reject any output that doesn't match the expected structure.

- Regex and allowlist checks: Validate that field values match expected patterns. Phone numbers, email addresses, and enumerated values are common targets.

- Confidence gates: If your model outputs a confidence score or you can derive one, route low-confidence outputs to human review instead of passing them downstream.

- PII and safety filters: Scan outputs for personally identifiable information or policy-violating content before they reach users or downstream systems.

- Human-in-loop review: For outputs that fail confidence gates or trigger safety filters, route to a human reviewer rather than auto-rejecting or auto-accepting.

Combining JSON schema enforcement, regex and allowlist checks, confidence gates, PII filters, and human-in-loop review for low-confidence outputs is a common pattern for production AI quality assurance.
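
The stack above can be sketched as a single function that runs the layers in order and returns a routing decision. Everything specific here is a hypothetical stand-in: the required fields, the intent allowlist, the 0.8 confidence floor, and the toy PII pattern would all come from your own schema and policies.

```python
import json
import re
from dataclasses import dataclass

# Hypothetical schema and policy values for illustration only.
REQUIRED = {"email": str, "intent": str, "summary": str, "confidence": float}
EMAIL_RE = re.compile(r"[^@\s]+@[^@\s]+\.[^@\s]+")
ALLOWED_INTENTS = {"refund", "cancel", "upgrade"}
SSN_RE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")  # toy PII pattern (US SSN shape)
MIN_CONFIDENCE = 0.8


@dataclass
class Decision:
    action: str  # "accept", "reject", or "review"
    reason: str = ""


def run_guardrails(raw: str) -> Decision:
    # Layer 1: structure. Unparseable or mis-shaped output is rejected outright.
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return Decision("reject", "invalid JSON")
    if not isinstance(data, dict):
        return Decision("reject", "not an object")
    for name, type_ in REQUIRED.items():
        if not isinstance(data.get(name), type_):
            return Decision("reject", f"schema: {name}")
    # Layer 2: regex and allowlist checks on field values.
    if not EMAIL_RE.fullmatch(data["email"]):
        return Decision("reject", "pattern: email")
    if data["intent"] not in ALLOWED_INTENTS:
        return Decision("reject", "allowlist: intent")
    # Layer 3: confidence gate. Low confidence goes to a human, not the bin.
    if data["confidence"] < MIN_CONFIDENCE:
        return Decision("review", "low confidence")
    # Layer 4: PII filter. A hit is escalated to a human reviewer.
    if SSN_RE.search(data["summary"]):
        return Decision("review", "possible PII")
    return Decision("accept")
```

Cheap structural checks run first so that later layers can assume well-typed data. The `reason` string gives a reviewer the specific failure without having to re-derive it.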

"The teams that ship reliable AI features aren't the ones with the most sophisticated models. They're the ones who built the most boring, reliable guardrails around average models."

When should you escalate to human review? Set explicit thresholds. If format compliance drops below 95% on a given input category, flag it for human audit. If a field value falls outside an expected range, route the record for review. Don't rely on engineers to notice problems in dashboards. Build the escalation into the pipeline.
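
Building the escalation into the pipeline can be as simple as computing compliance per input category and flagging anything under the threshold. A sketch under assumed names (`categories_to_audit` and the `(category, format_ok)` result shape are hypothetical):

```python
from collections import defaultdict


def categories_to_audit(results, threshold: float = 0.95) -> list:
    """Return input categories whose format compliance is below `threshold`.

    `results` is an iterable of (category, format_ok) pairs.
    """
    totals = defaultdict(int)
    passed = defaultdict(int)
    for category, format_ok in results:
        totals[category] += 1
        passed[category] += 1 if format_ok else 0
    return sorted(
        category
        for category in totals
        if passed[category] / totals[category] < threshold
    )


results = (
    [("invoice", True)] * 20
    + [("receipt", True)] * 9
    + [("receipt", False)]
)
flagged = categories_to_audit(results)
```

In this sample `invoice` is at 100% and `receipt` at 90%, so only `receipt` is flagged for a human audit.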

The key is making human review efficient. Route only the cases that genuinely need it. Provide reviewers with the original input, the model output, and the specific validation failure. A reviewer who has to reconstruct context from scratch will make more errors and take longer.

Benchmark, judge, and automate: the quality loop

With guardrails in place, ongoing measurement and automated feedback complete the testing lifecycle. Testing isn't a one-time event. It's a continuous loop.

Benchmarking structured outputs requires more than accuracy scores. You need to measure format compliance rates, field-level accuracy, schema drift over time, and latency under load. Each metric tells a different part of the story.
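
Two of those metrics are straightforward to compute from a batch of outputs. The sketch below assumes exact-match scoring per field and treats "format compliant" as "parses to a JSON object". Both are simplifying assumptions, and the function names are illustrative.

```python
import json


def format_compliance(raw_outputs: list) -> float:
    """Fraction of raw outputs that parse as a JSON object."""
    if not raw_outputs:
        raise ValueError("need at least one output")
    ok = 0
    for raw in raw_outputs:
        try:
            if isinstance(json.loads(raw), dict):
                ok += 1
        except json.JSONDecodeError:
            pass
    return ok / len(raw_outputs)


def field_accuracy(expected: list, actual: list) -> dict:
    """Per-field exact-match rate across paired expected and actual records."""
    hits, totals = {}, {}
    for want, got in zip(expected, actual):
        for name, value in want.items():
            totals[name] = totals.get(name, 0) + 1
            if name in got and got[name] == value:
                hits[name] = hits.get(name, 0) + 1
    return {name: hits.get(name, 0) / totals[name] for name in totals}


expected = [{"total": 10, "currency": "EUR"}, {"total": 20, "currency": "USD"}]
actual = [{"total": 10, "currency": "EUR"}, {"total": 25, "currency": "USD"}]
```

Reporting accuracy per field shows that `currency` is reliable here while `total` is wrong half the time, a distinction that a single aggregate score would hide.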

When it comes to judging output quality, you have two main options:

| Dimension | Human judges | LLM-as-judge |
|---|---|---|
| Cost | High | Low |
| Speed | Slow | Fast |
| Consistency | Variable | High |
| Nuance | High | Medium |
| Bias | Low (with training) | Model-dependent |
| Scale | Limited | Unlimited |

For comparative evaluation, pair A/B tests (checked for statistical significance) with an LLM-as-judge that scores subjective quality on a 1 to 5 scale. Give the judge human-written reference answers to limit model bias. Correlation alone doesn't show that the judge agrees with your human reviewers, so measure inter-rater agreement with Cohen's Kappa.
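
For reference, Cohen's Kappa compares observed agreement with the agreement expected by chance: kappa = (p_o - p_e) / (1 - p_e). A minimal unweighted implementation is sketched below. The score lists are made-up illustration data, and for ordinal 1 to 5 scales a weighted variant of kappa may suit you better because it gives partial credit for near-misses.

```python
from collections import Counter


def cohens_kappa(rater_a: list, rater_b: list) -> float:
    """Unweighted Cohen's kappa for two raters labelling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("raters must label the same non-empty set of items")
    n = len(rater_a)
    # p_o: share of items where both raters gave the same label.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # p_e: chance agreement from each rater's label frequencies.
    count_a, count_b = Counter(rater_a), Counter(rater_b)
    expected = sum(count_a[label] * count_b[label] for label in count_a) / (n * n)
    if expected == 1.0:
        return 1.0  # both raters used one identical label throughout
    return (observed - expected) / (1 - expected)


# Made-up 1 to 5 scores for eight outputs.
human = [5, 4, 4, 2, 1, 3, 5, 2]
judge = [5, 4, 3, 2, 1, 3, 4, 2]
kappa = cohens_kappa(human, judge)
```

In this sample the raters agree on 6 of 8 items (p_o = 0.75) and chance agreement is 13/64, so kappa is 35/51, or about 0.69.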

Here's a step-by-step process for closing the quality loop:

- Define your metrics. Format compliance, field accuracy, and schema adherence are the minimum. Add task-specific metrics for your domain.

- Run baseline benchmarks. Before any changes, establish your current performance across all metrics.

- Integrate evals into CI/CD. Run regression suites on every prompt or model change, and monitor production metrics like format compliance above 95% and field-level output accuracy.

- Use LLM-as-judge for scale. For subjective quality dimensions, automate scoring with a judge model. Calibrate it against human annotations regularly.

- Review failures weekly. Categorize failures by type and update your golden dataset with new cases. Feed those back into the next test cycle.

- Monitor post-launch. Production monitoring is not optional. Log every output, track compliance rates in real time, and alert on degradation.

The quality loop only works if you close it. Collecting data without acting on it is just overhead.
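
As an illustration of the post-launch monitoring step, here is a minimal rolling-window monitor that signals when format compliance falls below a threshold. The class name, window size, and minimum-sample guard are assumptions. A production setup would typically emit to your existing metrics and alerting stack instead.

```python
from collections import deque


class ComplianceMonitor:
    """Track format compliance over the most recent outputs."""

    def __init__(self, window: int = 200, threshold: float = 0.95, min_samples: int = 50):
        self.results = deque(maxlen=window)  # old results fall off automatically
        self.threshold = threshold
        self.min_samples = min_samples

    def rate(self) -> float:
        return sum(self.results) / len(self.results)

    def record(self, format_ok: bool) -> bool:
        """Record one output; return True when an alert should fire."""
        self.results.append(bool(format_ok))
        if len(self.results) < self.min_samples:
            return False  # too little data to judge
        return self.rate() < self.threshold


monitor = ComplianceMonitor(window=10, threshold=0.9, min_samples=5)
alerts = [monitor.record(ok) for ok in [True, True, True, True, True, False]]
```

With five passing outputs followed by one failure, the rolling rate drops to 5/6 and only the last call signals an alert.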

Why AI output quality demands relentless iteration, not just strict validation

These technical measures matter. But sustaining true output quality depends on your team's testing philosophy, not just your tooling.

Here's the uncomfortable reality: even the best validation stack will miss cases in production. Models change. User behavior shifts. Edge cases you never anticipated appear at scale. Benchmarks reveal that models still struggle on complex structures and non-standard tasks despite instruction tuning, and automated judges trade nuance for consistency, so they can't fully replicate human review.

The teams that maintain high output quality over time share one trait: they treat their golden datasets as living documents. They review and revise them after every significant production incident. They add new cases when they discover new failure modes. They retire cases that no longer reflect real usage patterns.

There's also a real tradeoff between coverage and velocity. A team that insists on 500-case regression suites before every deploy will ship slowly. A team that runs only smoke tests will ship fast but break often. The right balance depends on your risk tolerance and your users' expectations. Neither extreme is correct.

Automation is essential, but complete automation is still elusive. LLM-as-judge systems are fast and scalable, but they inherit the biases of the models they're built on. Human review is slow and expensive, but it catches the nuanced failures that automated systems miss. The best QA processes use both, with practical schema enforcement as the foundation that makes both approaches more efficient.

Invest in your testing infrastructure the same way you invest in your model selection. The model gets you to 75%. The testing infrastructure gets you to 95% and keeps you there.

Accelerate reliable output testing with datatool.dev

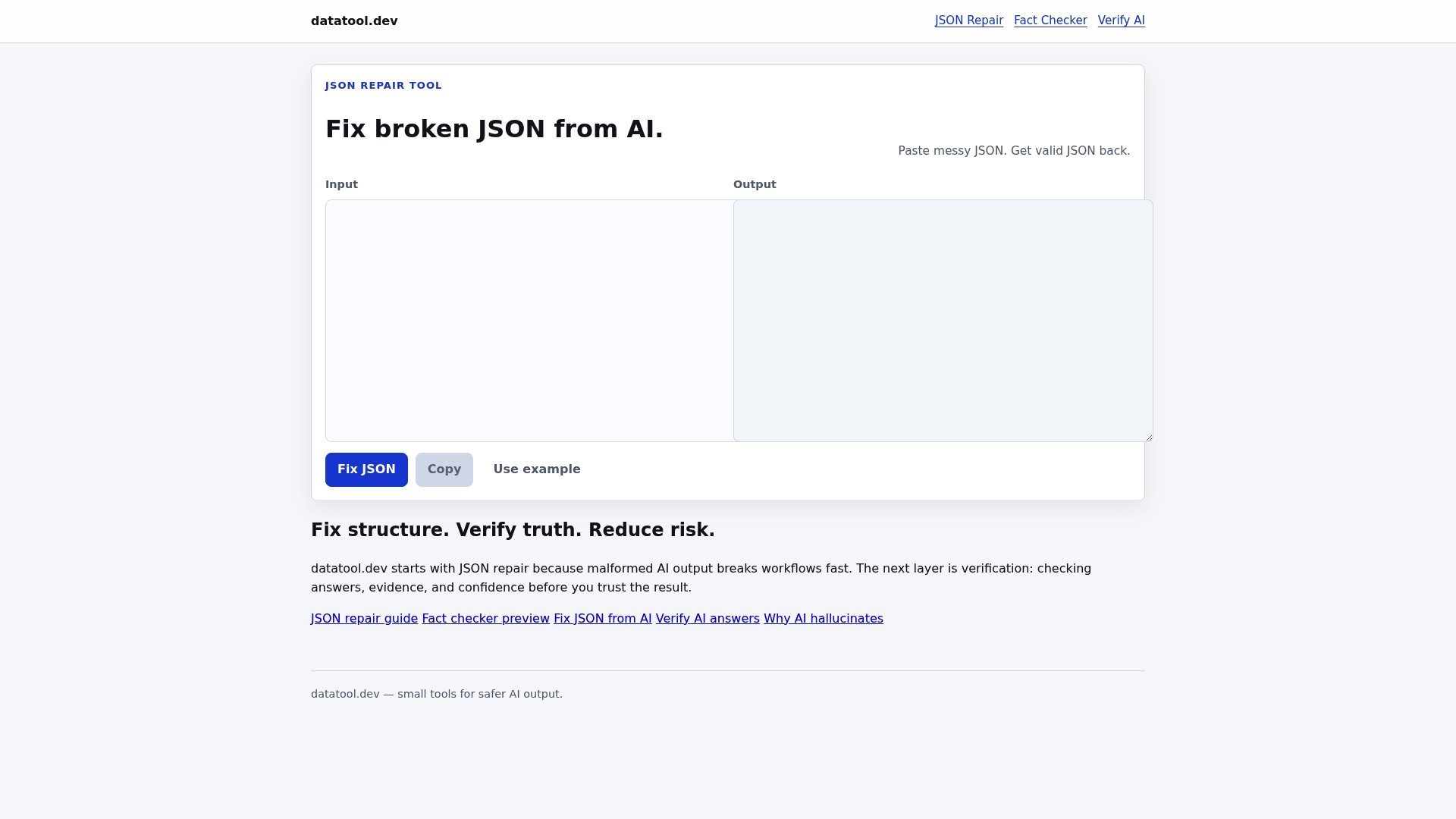

If you're ready to apply these best practices, automation tools can enhance your workflow and save significant time. datatool.dev is built specifically for the problems you've been reading about: malformed AI output, schema drift, broken JSON, and unreliable structured data from LLMs.

Start with fixing broken JSON from your existing pipelines. datatool.dev handles wrapped responses, partial objects, invalid escaping, and truncation automatically. From there, set up schema validation and regression monitoring with minimal configuration. The platform integrates directly with CI/CD workflows, so your quality checks run automatically on every model or prompt change. No manual test runs. No missed regressions. Just reliable, validated structured output at every stage of your pipeline.

Frequently asked questions

What are golden datasets and why are they important for AI testing?

Golden datasets are curated input-output pairs used to validate and benchmark AI models in a repeatable way. A set of 20 to 50 pairs covering typical, edge, and adversarial cases gives you a stable baseline for detecting regressions and measuring quality over time.

How do you enforce strict output formats in AI responses?

Use provider-native constraints, such as OpenAI's strict JSON schema mode or Anthropic's strict tool use, to enforce structure at generation time. Then post-validate with tools like Pydantic or Zod to catch the semantic errors that syntax checks miss.

What metrics matter most for ongoing AI output quality?

Keep format compliance above 95%, track field-level accuracy, and regularly run regression tests against updated golden datasets. Integrating evals into CI/CD and tracking production metrics continuously gives you early warning of quality degradation before it reaches users.

Why is human review still needed if automation tools exist?

Automated validation can miss ambiguous or nuanced errors that require expert judgment. Routing low-confidence outputs to human reviewers catches the edge cases that schema enforcement and regex checks can't handle.

How do you test for edge and adversarial cases in AI output?

Include empty, unusually long, and intentionally misleading inputs, along with prompts that challenge model instructions and domain boundaries. These cases expose fragility before production does, and synthetic data generation helps expand coverage efficiently.