Most developers assume that any large language model will reliably follow a prompt. Give it clear instructions, get compliant output. That assumption is wrong, and it costs teams real time in broken pipelines and invalid outputs. Understanding what is instruction following AI, and how it actually differs from a base LLM, is foundational knowledge for anyone building systems that depend on structured, predictable AI-generated data. Instruction-following models are distinct from base LLMs that simply predict the next token; they are trained specifically to produce outputs that directly address what you asked.

Table of Contents

- Understanding instruction-following AI models

- Navigating instruction hierarchies and conflict resolution

- Measuring instruction-following performance with benchmarks and evaluations

- Challenges and practical considerations in developing instruction-following AI

- Why instruction-following AI is less straightforward than it seems

- Enhance your AI output validation with datatool.dev

- Frequently asked questions

Key Takeaways

| Point | Details |

|---|---|

| Instruction following defined | Instruction-following AI models understand and execute natural language commands, unlike basic text generators. |

| Importance of hierarchies | AI must prioritize system and developer instructions over user or tool inputs for safe and reliable behavior. |

| Rigorous evaluation | Benchmarks like IFBench and Model Spec Evals test multiple constraints to validate true instruction adherence. |

| Challenges of partial compliance | Models often partially comply by missing requirements, necessitating automated, programmatic validation. |

| Persistent instructions needed | Consistent multi-step AI behavior requires treating developer constraints as ongoing contracts, not one-off prompts. |

Understanding instruction-following AI models

Base LLMs are text completion engines. Feed them a prompt, and they generate the most statistically likely continuation. That is useful for many things. It is not the same as executing an instruction.

Instruction-following AI models are trained to do something fundamentally different: receive a command, understand its intent, and produce output that satisfies that command. This distinction matters enormously when you are building a data pipeline that expects a JSON object with specific fields, a specific ordering, and no extra prose wrapped around it.

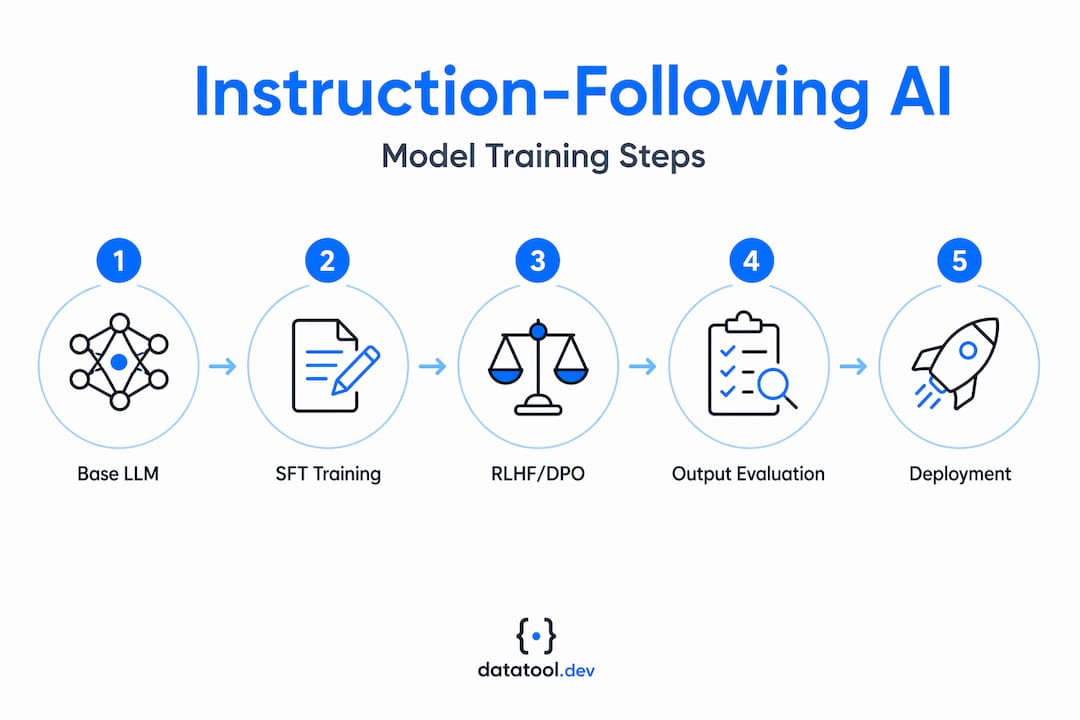

How instruction-following models are trained:

- Supervised fine-tuning (SFT): The model trains on thousands of human-written prompt-response pairs. It learns what a compliant response looks like. This establishes baseline instruction compliance.

- Reinforcement learning from human feedback (RLHF): Human raters rank model responses by quality and compliance. A reward model learns those preferences, and the policy model is updated to score higher. RLHF training involves three stages: SFT, reward model training from human preference rankings, and policy optimization using PPO.

- Direct preference optimization (DPO): A newer alternative to RLHF that skips the separate reward model. The policy learns directly from preference pairs, which simplifies the pipeline without sacrificing alignment quality.

- Policy optimization constraints: During RLHF, the model is penalized for drifting too far from its SFT baseline. This keeps it from gaming the reward signal with nonsensical but high-scoring outputs.

Instruction-following models are trained via SFT and RLHF or DPO to reliably comply with instructions, not just generate plausible text. For developers building on top of these models, understanding this training pipeline explains why model behavior changes significantly between a base checkpoint and its instruction-tuned variant. It also explains why prompt structure matters so much. The model has learned patterns of compliance, and your prompts either align with those patterns or fight against them.

For teams focused on reliable AI output handling, recognizing this distinction is step one. You are not just prompting a text generator. You are issuing instructions to a system trained to comply, and that system has real failure modes worth knowing.

Navigating instruction hierarchies and conflict resolution

Once you understand how instruction-following models are trained, a harder question emerges: what happens when instructions conflict? In production systems, this is not hypothetical. You have a system prompt, a developer-defined policy, a user request, and possibly tool outputs, all arriving in the same context window.

Models trained on instruction hierarchies handle this with a priority stack. OpenAI trains models to prioritize higher-authority instructions in the order of system, then developer, then user, then tool, following lower-priority instructions only when they do not conflict with higher-priority constraints. This directly improves safety and resistance to prompt injection attacks.

Why this matters in practice:

- A user instruction that contradicts a system-level safety policy should be ignored or refused, not executed.

- A tool output that tries to override developer-defined output formatting should not succeed.

- Malicious prompt injection embedded in retrieved documents should not hijack agent behavior.

"Models should treat instructions from higher-trust principals as constraints that cannot be overridden by lower-trust inputs, regardless of how those inputs are framed." This is the core principle behind instruction hierarchy in prompt management for production AI systems.

In practice, most developers do not think explicitly about instruction hierarchy when they write their first system prompt. They write instructions and assume the model will follow them. It usually does, until a user crafts an input that conflicts with the system prompt, or a retrieved document contains text that looks like an instruction. At that point, a model without proper hierarchy training will behave unpredictably.

The fix is not just writing better prompts. It is understanding that your system prompt needs to be authoritative, your developer policies need to be explicit, and your model needs to be one that has been trained to respect these layers. Instruction-based AI systems that ignore hierarchy are a liability in any production context.

Measuring instruction-following performance with benchmarks and evaluations

Knowing that a model is "instruction-tuned" is not enough. You need to measure how well it actually follows instructions, especially under the multi-constraint conditions that reflect real workloads.

Two evaluation frameworks are worth knowing in detail.

IFBench (Ai2): IFBench tests models on precise instruction following under multiple simultaneous constraints, using real user prompts. Tasks include factual QA, content review, summarization, and creative support. The key design choice is that prompts include several constraints at once, because that is how real instructions work. A single prompt might require a specific word count, a particular tone, a specific output format, and the inclusion of certain keywords. Meeting three out of four is a failure.

OpenAI Model Spec Evals: Automated graders score candidate responses against a rubric, producing compliance scores that are aggregated using median scoring to reduce variance. This approach enables scalable validation across large model checkpoints.

| Evaluation framework | Constraint type | Scoring method | Primary use case |

|---|---|---|---|

| IFBench (Ai2) | Multi-constraint, real prompts | Binary per constraint | Research and model comparison |

| Model Spec Evals | Rubric-based compliance | Median aggregation | Production model validation |

| Custom programmatic checks | Schema and field-level | Pass/fail per field | Pipeline-specific validation |

What good evaluation looks like in practice:

- Test against prompts with three or more simultaneous constraints.

- Score each constraint independently, not the response as a whole.

- Use automated graders for scale, but audit a sample manually.

- Track compliance rates per constraint type to identify systematic weaknesses.

Pro Tip: Do not rely on semantic similarity scores to validate instruction compliance. A response can be topically correct and still miss a required field, violate a length constraint, or use the wrong output format. Testing AI compliance requires checking each constraint independently, programmatically where possible.

For teams using automated grading pipelines, the key insight is that instruction adherence is a multi-dimensional score, not a single pass/fail. Build your evaluation accordingly.

Challenges and practical considerations in developing instruction-following AI

Evaluation frameworks reveal the gap between theory and production. Here are the failure modes you will actually encounter.

Partial compliance is the most common and the most dangerous. The model produces output that looks correct at a glance but violates one or more constraints. Partial compliance is a frequent failure mode where models omit or misorder required keywords or fields, and this can completely undermine the usefulness of an otherwise reasonable response. A JSON object missing one required field will break your downstream parser. A summary that ignores a length constraint will fail your UI layout. These are not edge cases; they are regular occurrences with current models.

Multi-step agent orchestration adds another layer of complexity. A one-shot prompt is relatively easy to validate. A multi-step agent running across dozens of turns is not. Instructions must be re-grounded with current state at each step, and persistent behavioral contracts need to be stored separately from one-off prompts to maintain consistency across the full task lifecycle.

Key challenges to design around:

- Partial compliance that passes semantic checks but fails structural ones.

- Instruction drift in long agent sessions where early constraints are forgotten.

- Context window pressure causing the model to deprioritize earlier instructions.

- Re-grounding failures after pauses, restarts, or tool call interruptions.

- Over-reliance on prompt engineering when the real fix is programmatic validation.

Pro Tip: Treat every instruction constraint as a test case. Write a programmatic check for each one. If your prompt says "return a JSON object with fields X, Y, and Z," your validator should confirm the presence and correct type of all three fields, every single time. Fuzzy semantic checks will miss the failures that matter most when handling multi-constraint prompts in production.

The practical takeaway: instruction-following AI applications require validation infrastructure, not just well-written prompts. The model is a compliance system, and like any compliance system, it needs auditing.

Why instruction-following AI is less straightforward than it seems

Here is the honest version of what most articles skip: instruction following is not a solved problem, and treating it as one is how production systems fail quietly.

The common developer mental model is that an instruction-tuned model is basically a reliable command executor. Write a clear prompt, get a compliant output. In practice, reliable instruction following depends on respecting instruction hierarchies and persistent behavioral contracts, not simply following user prompts. That distinction is frequently overlooked, and the oversight shows up as subtle bugs that are hard to trace.

What we have seen consistently is that developers invest heavily in prompt engineering and almost nothing in validation. They write detailed system prompts, test a few examples manually, and ship. Then partial compliance issues cause operational failures in production, and the debugging process is painful because the outputs look plausible. The model did not crash. It just missed a field. Or reordered a list. Or ignored a format constraint under specific input conditions.

The fix requires a mindset shift. Stop thinking of your system prompt as a configuration file and start thinking of it as a contract. Every clause in that contract needs a corresponding test. Your instruction hierarchy needs to be explicit and enforced. Your agents need persistent behavioral state, not just a long context window.

Developers who treat instruction-following AI as a compliance engineering problem, rather than a prompting problem, build systems that actually hold up. Those who treat it as a prompting problem spend a lot of time chasing dealing with partial compliance failures they did not anticipate.

The other underappreciated point: model behavior changes across versions. An instruction-following model that scores well on your validation suite today may behave differently after a provider update. Build your validation infrastructure to run continuously, not just at deployment.

Enhance your AI output validation with datatool.dev

Instruction-following AI produces structured outputs, and structured outputs break in predictable ways: missing fields, wrong types, truncated objects, schema drift, invalid escaping. Understanding the theory of instruction compliance is useful. Having tools that catch failures automatically is essential.

datatool.dev is built specifically for developers working with AI-generated structured data. It handles broken JSON, malformed outputs, partial objects, and schema violations from LLMs in real pipelines. If your instruction-following model returns something that almost matches your schema, datatool.dev finds and fixes it. Paste malformed output. Get valid, schema-compliant data back. It is the validation layer your instruction-following pipeline needs, without building it from scratch.

Frequently asked questions

What is the main difference between base large language models and instruction-following AI?

Base large language models predict the next token in a sequence without targeted compliance to any instruction, whereas instruction-following models produce outputs that directly address the command given, trained specifically for that behavior.

Why is instruction hierarchy important in AI systems?

Instruction hierarchy ensures the model respects higher-trust sources like system and developer policies over user inputs. Models follow a priority stack of system, developer, user, then tool instructions, which is critical for safety and prompt-injection resistance.

How do benchmarks like IFBench improve instruction-following models?

They expose gaps in multi-constraint compliance that single-prompt tests miss. IFBench tests models on multi-constraint instructions from real user prompts, covering tasks like summarization and content review, giving developers precise data on where models fail.

What is partial compliance, and why is it a concern for AI developers?

Partial compliance occurs when a model produces output that is topically correct but violates one or more specific constraints. Models omit or misorder required fields in these cases, which breaks downstream parsers and pipelines that depend on exact structural compliance.

How can developers ensure instruction-following AI maintains consistent behavior in multi-step or long-running tasks?

Design persistent instruction layers that act as behavioral contracts and re-ground them with current state at each step. Instructions treated as persistent contracts and re-grounded per step maintain consistency across pauses, restarts, and tool call interruptions.